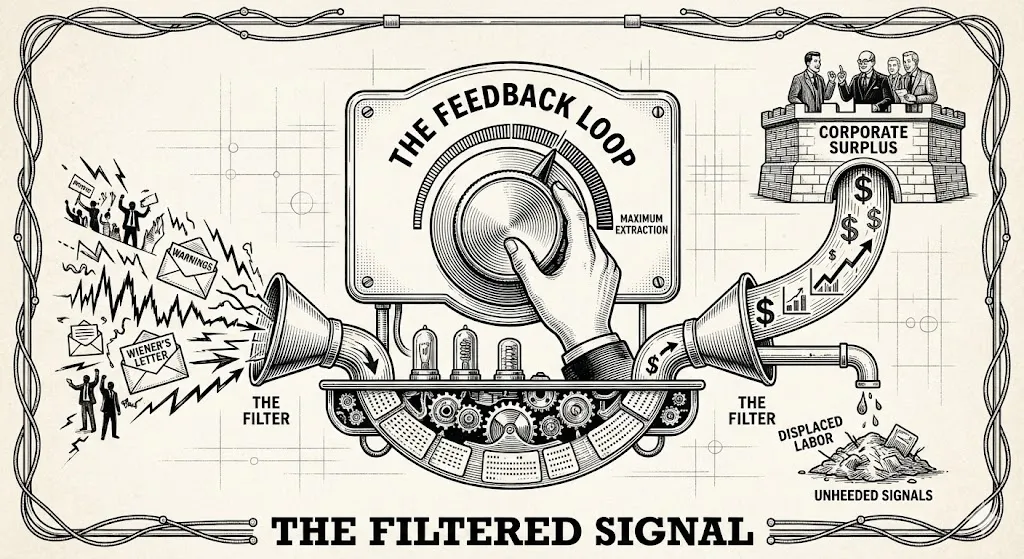

The Feedback Loop and the Filtered Signal

A short history of who controls the loop, from Norbert Wiener's letter to the age of AI.

Drones over Ukraine have made the sky so contested that anything airborne gets jammed or shot down, whether friend, foe, or civilian. There is now a serious proposal for drone air traffic control, because the machines are too numerous and too fast for anyone to track who sent them. The next step writes itself: replace the operator too, let the machine make the call. When making decisions autonomous becomes more valuable than keeping humans in control, you start to wonder whether humans ever were in control.

When you watch Anthropic resist Pentagon pressure to militarise its models, it’s hard not to think of Skynet. But this fear is not new and it was never science fiction. In my previous piece I wrote about Karel Čapek, who watched Europe industrialise around him, coined the word robot in 1920, and put the machine rebellion on stage before anyone else did. This piece is about the man who built the actual machines — and saw, more clearly than anyone, where they were going.

In 1949, a mathematician named Norbert Wiener wrote a letter to Walter Reuther, who ran America’s auto workers’ union and had built it into a political force by surviving beatings from Ford’s security men. The same kind of factory now, under different branding, calls unions a parasitic class. Wiener had spent the war building targeting systems that predicted where a plane would be rather than where it was, turning an observed trajectory into anticipation. He saw, and almost nobody else did in 1949, that this didn’t stop at air defence: a machine capable of anticipating motion could eventually replace judgement itself. Alan Turing was arriving at the same conclusion from the computational side. Turing asked whether a machine could think, Wiener asked how an intelligent system corrects itself, and together they had sketched something that didn’t yet have a name.

Wiener told Reuther what was coming and suggested labour should own the machines before the surplus flowed upward. Reuther never wrote back. It didn’t matter. The pattern doesn’t require warnings to be ignored — it just requires that whoever owns the technology at the moment of arrival captures the first surplus. The mainframe concentrated power in institutions. The personal computer redistributed creative capability into individual hands, and within twenty years had been absorbed into a platform economy that concentrated power more efficiently than the mainframe ever had. The feedback loop always corrects eventually. It just flows upward first.

The Thermostat

To understand what Wiener thought he had found, start with a thermostat. It measures temperature, compares it to a target, and adjusts the system — sense, compare, correct, repeat. Wiener’s insight was that this loop is the basic unit of all intelligent behaviour: a hand reaching for a glass, a predator tracking prey, a corporation adjusting prices. He called the science of these loops cybernetics, from the Greek for helmsman, and his 1948 book on the subject was an unlikely bestseller, giving the mid-century scientific world a new vocabulary for what it was building.
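The sense, compare, correct, repeat cycle can be put in a few lines of code. What follows is a minimal sketch, not Wiener's own formulation: it assumes the simplest possible correction rule (close a fixed fraction of the gap on each pass), and the names `thermostat_step`, `run_loop` and the `gain` value are illustrative choices.

```python
def thermostat_step(measured: float, target: float, gain: float = 0.5) -> float:
    """One pass of the loop: compare the reading to the target, return a correction."""
    error = target - measured   # compare: how far off are we, and in which direction?
    return gain * error         # correct: close a fixed fraction of the gap (assumed rule)


def run_loop(start: float, target: float, steps: int = 20, gain: float = 0.5) -> list[float]:
    """Repeat sense -> compare -> correct and record the temperature after each pass."""
    temperature = start
    history = [temperature]
    for _ in range(steps):
        # sense: in this toy model the reading is simply the true temperature
        temperature += thermostat_step(temperature, target, gain)
        history.append(temperature)
    return history


if __name__ == "__main__":
    for target in (21.0, 25.0):
        final = run_loop(start=15.0, target=target)[-1]
        print(f"target {target}: settles near {final:.3f}")
```

The loop itself has no opinion about where it ends up. It settles on whatever target it is handed, which is why the question of who sets that number matters more than the mechanism.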

The specific danger later acquired a name, cybernation: not just automation of muscle, which the industrial revolution had already delivered, but automation of mind. The targeting systems he’d built for the war were primitive ancestors of something that could learn, and if machines could learn, the judgement and pattern recognition that had always been labour’s last refuge would stop being a refuge. He considered stopping his research. He decided against it, concluding that if he didn’t develop it, someone with less conscience would. That reasoning (if not me, someone worse) is now the governing psychology of an entire industry.

The Panic That Was Right

By 1964 the fear had become impossible to ignore. Automated production lines were replacing assembly workers. Institutional computers were beginning to process the work of clerks and accountants. Thirty-five intellectuals, including two Nobel laureates, sent an open letter to President Johnson called the Triple Revolution, arguing that cybernation was severing the central assumption of the economy: that income came from work. If machines could produce goods with a fraction of the human labour previously required, the gains would flow to whoever owned the machines. Workers would have nothing left to sell.

Their prescription was precise: a guaranteed income decoupled from employment, an excess profits tax on corporations capturing the cybernation surplus, government authority to regulate the speed of automation. Not a rejection of the technology — an insistence that the political question of what to do with the surplus be answered deliberately. Johnson read it, convened a commission, and the commission recommended job training programmes instead. The Triple Revolution had asked what happens if work becomes structurally scarce. Johnson’s commission answered by assuming it wouldn’t.

Martin Luther King kept returning to the Triple Revolution’s framing in his final years, supporting a guaranteed income as a matter of economic justice rather than charity. In his last Sunday sermon, four days before he was shot, he referenced the thesis directly. The connection between racial justice and economic redistribution was clear to him in a way it wasn’t to most of the white liberal coalition. The loop wasn’t being allowed to correct there either.

The panic crested and apparently subsided. The government became bigger, employment rose, the service sector expanded, the personal computer arrived looking like vindication. What had actually happened was that displaced workers had been absorbed into a new layer of employment that generated the appearance of prosperity while wages decoupled from productivity through the 1970s. The surplus was captured, as it had always been captured, slowly enough that the political alarm didn’t sound. Then around 1979 the hinge turned. The moment the wage-productivity gap became visible was the moment the political response moved in the opposite direction: top tax rates cut, unions weakened, shareholder value enthroned as the governing ideology of the economy. The question had been asked correctly in 1964. The answer in 1980 was a deliberate choice at a moment when a different answer was still possible.

The Loop the Soviets Couldn’t Close

When Wiener published his book, the Soviet Union declared cybernetics a reactionary pseudoscience, a capitalist fantasy of replacing class-conscious workers with obedient machines. The critics had not read the book. They were working from a summary in an American magazine. After Stalin died in 1953 the reversal was total, and the irony severe enough to generate a national joke: “They told us before that cybernetics was a reactionary pseudoscience. Now we are firmly convinced that it is just the opposite: cybernetics is not reactionary, not pseudoscience, and not a science.”

The real problem was structural. Soviet economists proposed OGAS — a nationwide economic computing network gathering real-time data from factories and farms across the entire union, feeding it into central planning. An internet of economic feedback, decades before the internet existed. It was technically feasible and politically impossible. The command economy ran on information flowing downward. OGAS required it to flow upward and sideways — factories reporting real capacities rather than the inflated figures that made local managers look good, ministries sharing data across bureaucratic boundaries. Every ministry had an incentive to kill it. They did. The Soviet Union eventually abandoned developing original computer technology and began cloning Western machines instead.

The technology meant to optimise the command economy was incompatible with the command structure. You cannot build a self-correcting system if the people at the top cannot tolerate correction. China is running the same experiment now at higher speed. DeepSeek distilling OpenAI’s models is structurally the same move as Soviet engineers cloning IBM machines: recognise the technology is real, catch up through imitation, then face the question of whether the political system is compatible with what the technology actually requires. Frontier AI seems to need open information flows, independent researchers with genuine freedom to report what they find, and institutional willingness to receive unwelcome signals. Whether China can build that without becoming less authoritarian is the most consequential unresolved question in technology right now.

The Same Letter, Written Again

The AI researchers sending open letters about existential risk are running the same loop Wiener ran in 1949. They are building the tools, frightened of the tools, writing the letters. Senior researchers at major laboratories have resigned, published warnings, given anonymous briefings to journalists. Some stay inside companies they believe are moving too fast, on the theory that their presence makes the outcome less dangerous. Some leave and start competing companies on the same theory. The loop of conscience keeps generating signals. The institutional structure keeps filtering them out.

The “don’t be evil” motto Google adopted around 2000 and gradually abandoned was not simple hypocrisy. It was an aspiration that ran into structural reality. The company that can afford ethics is the one that hasn’t yet fully optimised for returns. As the optimisation intensifies, the ethics function gets reclassified from asset to overhead. When the Pentagon asks an AI safety company to make its models available for military targeting, the decision isn’t made by the researchers who wrote the safety guidelines. It’s made by the people who own the company and need the contract. The thermostat is being reset by the same hands that always reset it.

What The Loop Is Missing

The sheep metaphor from the previous piece was about displacement — surplus moving in one direction while people moved in another. The feedback loop is about something more specific: what signals get processed, what gets filtered, and who controls the target temperature.

Every technological transition has produced distress signals about displacement: the early outcry of the handloom weavers, the prophetic letters of Norbert Wiener, the later, more formal declarations of the Triple Revolution. AI researchers are sending such signals now. The signals have always been legible. The loop has always been structured to filter them out, not through conspiracy, but because the people who own the technology set the correction targets toward their own interests.

Wiener called his popular book The Human Use of Human Beings. What he meant was technical: a feedback system needs its components doing the work they’re suited to. A human being used as a servo-mechanism, performing tasks a machine could perform and denied the judgement that distinguishes human cognition, is a system running at catastrophic inefficiency. The argument the Triple Revolution was circling, and that Johnson’s job-training answer failed to reach, was that it isn’t enough to ensure people have work. The work has to be the right use of people. An economy that generates full employment through an ever-expanding layer of coordination, and the coordination of that coordination, is not a success. It is a thermostat generating the appearance of warmth without heating the room.

If AI is genuinely capable of compressing that layer — dissolving the abstraction economy accumulated over decades of financialisation — we face the same choice that was on the table in 1964. Not which jobs will survive, but what we actually want to produce, for whom, and on what terms. That is not a technological question. It cannot be answered by a better model or a more responsible governance structure. It is a question about who sets the temperature. And the thermostat, right now, is in the same hands it has always been in.

Wiener sent the question to Reuther in 1949. Johnson received it in 1964 and answered something easier. The letter is being written again, at far greater speed and with far higher stakes. The loop is ready to correct. It is waiting for someone to set it toward something other than maximum extraction. So far, in four hundred years of this pattern, that has never happened without sustained political pressure forcing the question into the arena and keeping it there long enough to matter.

This is the second in a series on the long history of who benefits when machines do the work. The first piece, The Sheep and the System, traced the line from the Enclosure Acts to the present. The next piece will explore Player Piano — Kurt Vonnegut’s 1952 novel about full automation and what it does to the humans left behind.