The Piano Plays Itself

A short history of what happens when the mechanism runs the logic and the human in the loop becomes a formality.

On February 28, 2026, a missile struck the Shajareh Tayyebeh girls’ elementary school in Minab, Iran. Between 168 and 180 people were killed, most of them children. The site had once been part of a Revolutionary Guard complex. It had been a school for nearly a decade. The AI targeting system either didn’t know this or didn’t weight it heavily enough. The human who authorised the strike had roughly twenty seconds to verify the target before signing off. Nobody made a decision, exactly. The machine ran the logic it was given. The human completed the gesture. The children were already dead before anyone thought to ask what the school used to be.

A few weeks earlier, a different kind of gesture was being performed in Washington. A young government official, carrying out an executive order to cut programmes related to DEI, was asked in a deposition to explain what DEI meant. He couldn’t. He admitted the review had been conducted using ChatGPT, which processed programme descriptions automatically and flagged targets for cuts that he hadn’t read and couldn’t characterise. Real programmes, real people, real consequences, all run through a language model by someone who had been given power without understanding and a tool without judgement, producing decisions nobody quite made.

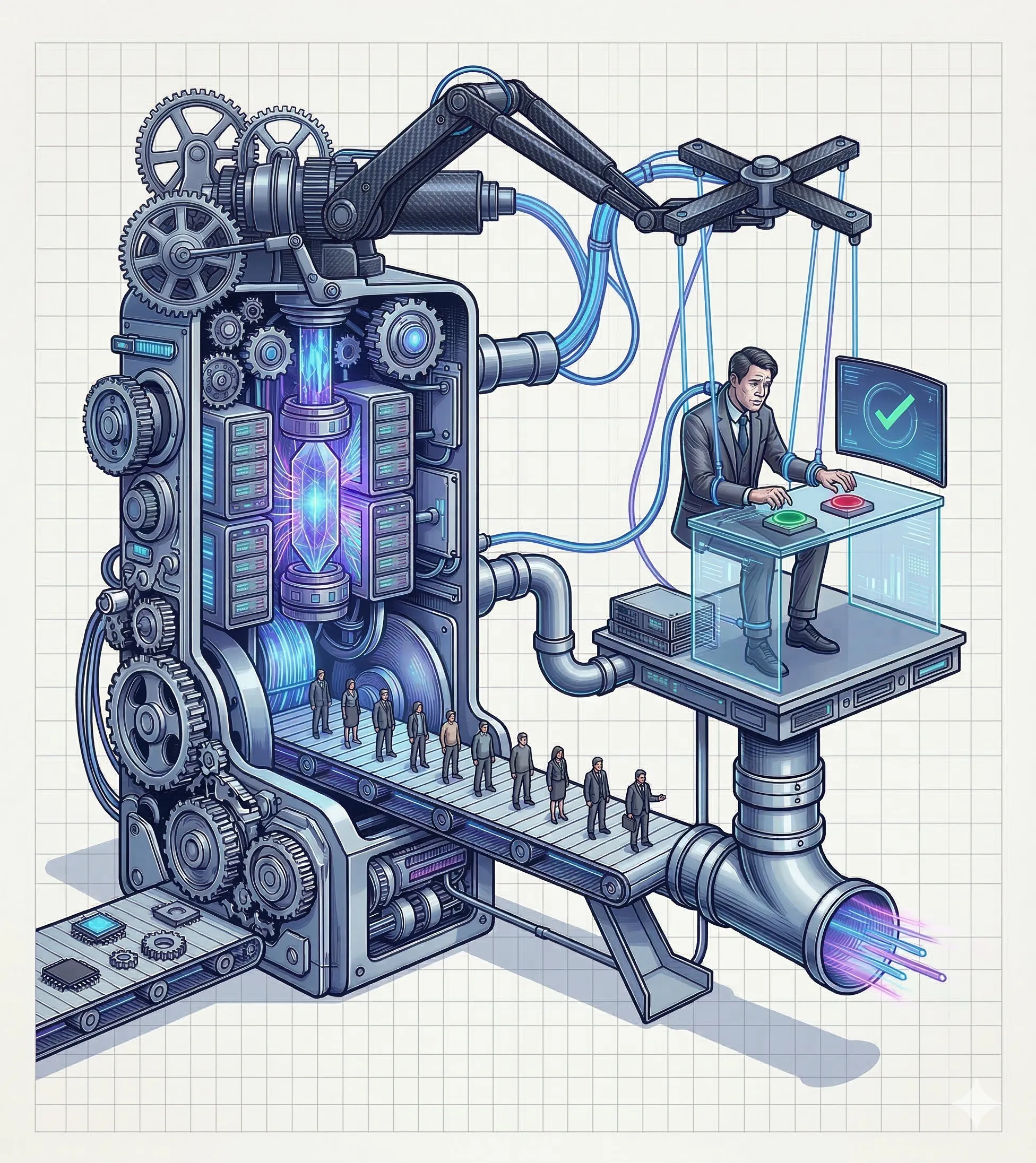

The same mechanism. Different stakes. A human in the loop, performing the gesture of decision, while the machine runs the logic. This is not a story about bad actors or rogue algorithms. It is a story about what happens when the instrument plays itself — and the people operating it mistake the sound for music they composed.

The Human in the Loop: Completing the gesture while the mechanism runs the logic.

The Book Nobody Wanted To Publish

In 1952, Kurt Vonnegut published his first novel. He had been working at General Electric in Schenectady, and one afternoon he watched an engineer record the movements of a skilled machinist onto a punch card, then feed the card back into the milling machine. The machine reproduced every motion with perfect precision. The machinist watched his own hands become redundant in the time it took to run the card. Vonnegut went home and wrote a book about it.

Player Piano was rejected by every publisher who understood it, which was most of them. It was eventually published by Charles Scribner’s Sons as a science fiction novel, a category it was placed in because the future it described was too uncomfortable to shelve as realism. It is not science fiction. It is a description of something Vonnegut watched happen to one man, extended forward with merciless logic.

The world of the novel is America after a third world war. Automation solved the production crisis while the workers were at the front, and the engineers who built the machines never gave the jobs back. Society has split into two classes. On one side: the engineers and managers who design and run the machines, defined entirely by their test scores, their credentials, their place in the hierarchy of technical competence. On the other: everyone else, offered a choice between the army and the Reconstruction and Reclamation Corps, the “Reeks and Wrecks”: make-work that exists purely to give people something to occupy their hands while the machines do everything that matters.

There is material comfort. There is no purpose.

A visiting Shah tours this society as a diplomatic guest. His translator struggles with one word he keeps repeating — a word from his own language meaning slave. Every time an official explains the freedom and dignity of American life, the Shah listens politely, looks at the nearest citizen, and says the word again. He is not being provocative. He is being precise. He can see, from outside the system, what the people inside it cannot: that the relationship between their effort and their lives has been severed, and that severing is what his word describes, regardless of the living standards attached to it.

The novel’s revolutionaries call themselves the Ghost Shirt Society, after the Lakota Sioux’s last spiritual resistance before Wounded Knee — shirts they believed would stop bullets. The name is not hopeful. The revolution happens. The machines are destroyed. And in the final scene, the revolutionaries are already in the rubble, instinctively, almost tenderly, beginning to rebuild them. The loop closes. The piano winds itself back up.

The Wrong Nightmare

When the Triple Revolution authors wrote to Johnson in 1964 — the letter we traced in the previous piece — their nightmare was Vonnegut’s: mass uselessness, structural unemployment, people with nothing left to sell. Johnson’s commission answered with job training programmes, and for a generation it looked like the pessimists had been wrong. Employment rose. The service sector absorbed the displaced. The personal computer arrived and looked like liberation.

What nobody predicted — what Vonnegut’s novel didn’t quite reach — was a different nightmare. Not uselessness. Its opposite.

A recent wave of workplace studies describes what AI is actually doing to knowledge work: not replacing it but intensifying it. More context-switching, more responsibility concentrated in fewer people, higher expectations per employee, faster throughput, thinner margins for error. The worker isn’t made redundant. The worker is made into a hub — a coordination point between systems too complex and too fast for any one person to fully understand — and told this is empowerment.

Skill atrophy is now a recognised occupational hazard. Radiologists who rely on AI triage gradually lose the ability to read films without it. Programmers who lean on code generation lose the muscle memory of building from first principles. Writers who draft with AI assistance find, months later, that the unassisted sentence comes slower, feels stranger, sits less naturally in the hand. The skill doesn’t disappear with a bang. It drains quietly, unnoticed, until the day the tool is unavailable and the human reaches for an ability that is no longer quite there.

This is not the player piano replacing the pianist. This is the player piano training the pianist to operate its levers — faster, more smoothly, with greater apparent productivity — until the pianist can no longer play without it, and can no longer quite remember what playing was.

The Projectionist, The Spectator, The Roll

Vonnegut’s player piano is a mechanism that produces music without anyone playing. The keys move, the hammers strike, the sound is real. But the performance is a recording. What the current moment has added is a further layer of confusion: the people operating the mechanism believe they are composing.

The deposition video circulated because it was funny. A young official, given genuine power over genuine programmes, using a chatbot to process decisions he couldn’t explain. But the laughter covered something more unsettling: he was not unusual. He was representative of a new class of knowledge worker whose job is to mediate between systems they don’t understand, producing outputs they didn’t originate, at a speed that precludes reflection. The content creator whose aesthetic is indistinguishable from the model’s defaults. The analyst whose strategic insight is a reorganised prompt. The official who cut programmes by pressing play and called it governance.

They are projectionists — running the mechanism, experiencing the authorship, producing nothing they made.

The audience is no different. AI-generated text lacks the tells that visual and audio generation cannot hide — the recurring faces, the morphing hands, the rhythmic flatness of synthesised voice. Text patterns exist but are less legible to the casual reader, which means the spectator of generated writing is less equipped to know what they are watching than the spectator of generated images. They sit in front of the screen while the piano plays and hear music that sounds composed.

And underneath both: the piano roll itself. Pre-encoded, unaware, playing the sequence it carries. This is not a metaphor for the AI. It is a metaphor for the worker who has been incorporated into the system fully enough that their responses are predictable outputs of a process, not judgements of a person. The warehouse worker of the knowledge economy. Fast, responsive, handling apparent complexity, owned by the sequence. Vonnegut imagined this class in his “Reeks and Wrecks”, the Reconstruction and Reclamation Corps: make-work for the displaced. The actual version is more insidious: make-work that looks like real work, measured and monitored and rewarded, producing outputs that feed back into a system whose purpose nobody in it can fully articulate.

The Borg Didn’t Have A Plan

In the previous pieces, the surplus always went somewhere identifiable. The enclosure enriched the landowners. The factory system enriched the industrialists. The platform economy enriched the platform. You could name the beneficiary, trace the flow, identify the thermostat and the hands that set it.

The current moment is harder to map. SaaS companies — the infrastructure layer of the knowledge economy, the software that mediated between workers and tasks for three decades — are beginning to dissolve into AI tools generated internally, on demand, by organisations that no longer need to buy coordination software because they can instantiate it. The software ate the world, and now something is eating the software. The flows of meaning and value are moving through channels that nobody commissioned and nobody fully monitors, in organisations so abstracted from their own ground truth that the question of what the work is actually for has become genuinely difficult to answer.

This is not extraction in the old sense. The landowner knew he was extracting. The factory owner knew he was capturing surplus. The platform knew it was monetising attention. The current system is running a logic that has become, in a meaningful sense, self-executing. The targeting AI that struck the school was not malicious. The chatbot that cut the DEI programmes was not cynical. They were mechanisms running the instructions they had been given, by people who had been given power they didn’t understand, inside systems whose purpose had been decided several organisational layers above anyone who could see the consequences.

Nobody is enjoying the music. The piano is playing because it was wound up, and the room it’s playing in has been empty for longer than anyone noticed.

Vonnegut’s Real Question

The question Player Piano keeps circling, which Vonnegut finally put into words thirteen years later in God Bless You, Mr. Rosewater, is this: how do you love people who have no use?

Not how do you employ them. Not how do you compensate them or retrain them or help them find new roles in the emerging economy. How do you love them. It is a moral question that all the economic answers have consistently failed to reach, because economic answers treat the problem as resource allocation and the real problem is meaning.

The enclosure removed people from direct relationship with the land that fed them. The industrial revolution removed them from ownership of their craft. The platform economy removed them from ownership of their audience and their data. The current moment is removing something harder to name: the sense that the work one does is the work of a person, traceable to a judgement, owned by a mind, distinct from what the machine would have produced in the same situation.

When the machinist at General Electric watched his hands be encoded onto a punch card and played back by a machine, he lost his livelihood. What Vonnegut understood, watching him, was that he lost something else first — the sense that the movement of his hands was his. That the thing he knew how to do was knowledge he held, not a pattern the machine could carry.

The skill atrophy is real and measurable. The AI psychosis — the documented tendency to confuse interactive consumption with creation, to experience the model’s output as one’s own thought — is real and spreading. The deposition video is real and not aberrant. These are not separate phenomena. They are the same phenomenon: people losing the thread back to their own judgement, gradually, in ways they don’t notice until the thread is gone, inside systems moving too fast for the loss to register as loss.

The piano plays itself. The question Vonnegut was asking in 1952, the one nobody in the room with the machinist thought to ask, was not whether the machine could do the job. It was whether the job, once the machine could do it, still meant anything to the person who used to do it.

We are living inside that question now. We have not begun to answer it.

This is the third in a series on the long history of who benefits when machines do the work. The first piece, The Sheep and the System, traced the line from the Enclosure Acts to the present. The second, The Feedback Loop and the Filtered Signal, followed Norbert Wiener’s warning from 1949 to the autonomous drones of today. The next piece will look at the present moment: the self-enclosure, the willing pod, and what it means that the last commons being fenced off is interior.